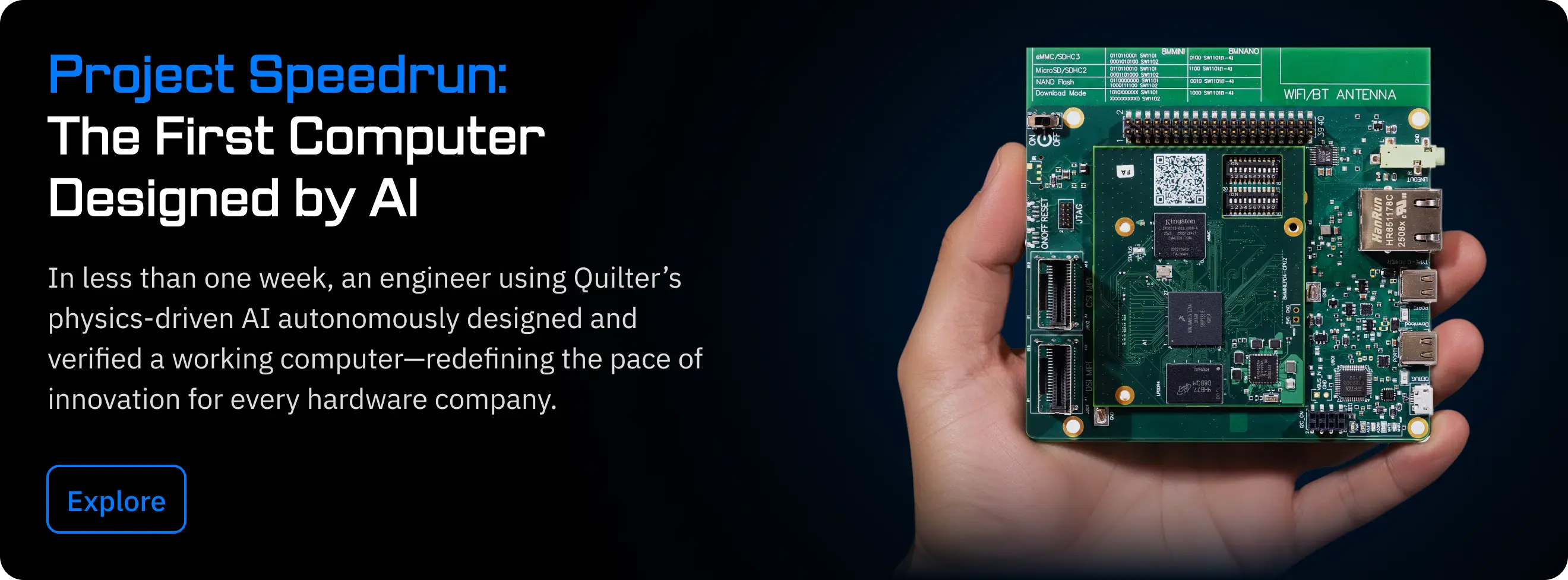

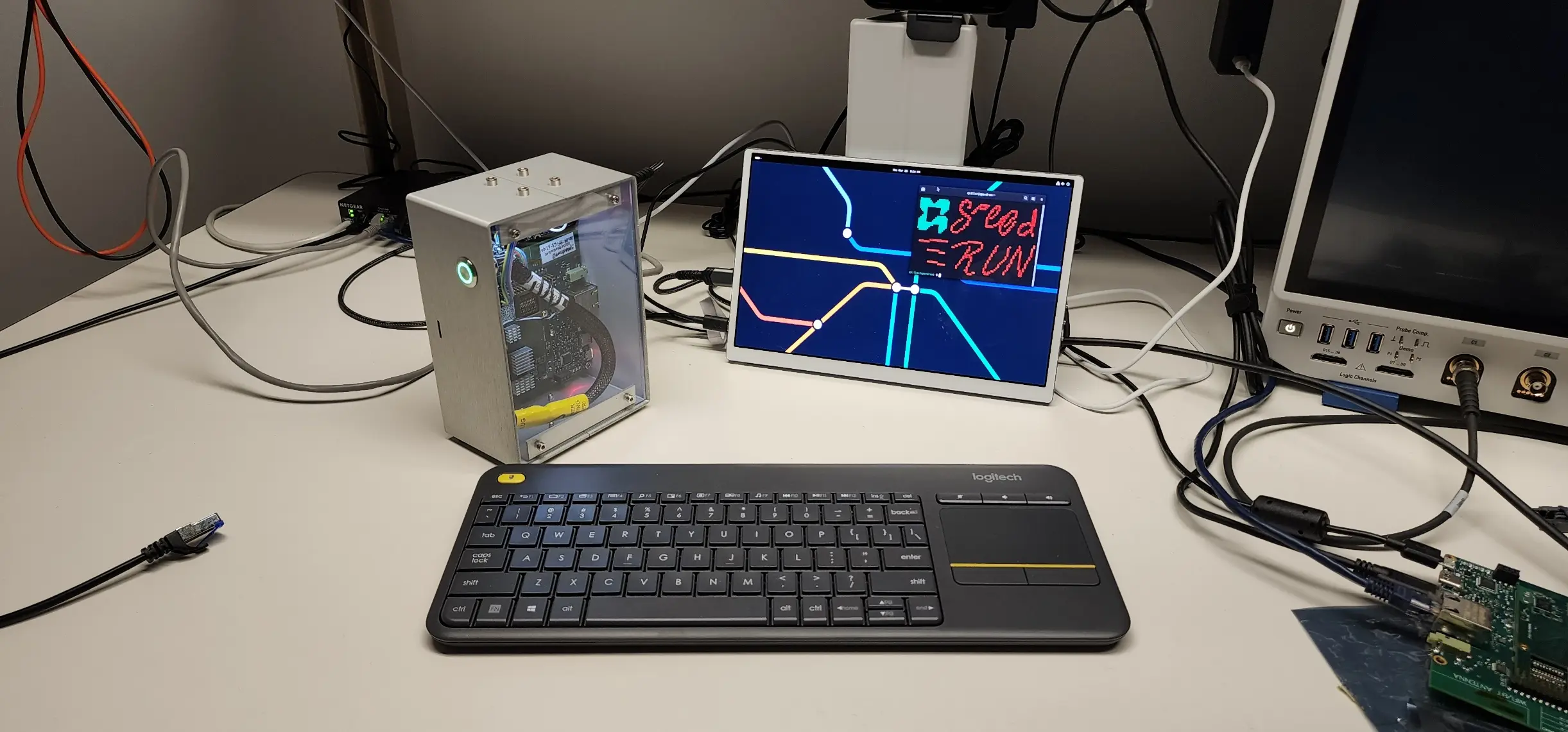

This article is one part of a walkthrough detailing how we recreated an NXP i.MX 8M Mini–based computer using Quilter’s physics-driven layout automation.

Choosing the right PCB design software isn’t just about features or brand names anymore. In 2026, engineering teams are rethinking value, moving from traditional per-seat subscriptions to outcome-based models powered by AI. Here’s how Quilter, Altium Designer, and Autodesk Fusion 360 Electronics compare, and why the most innovative teams are looking beyond license fees to measure true ROI.

One more reason this conversation is heating up right now: Autodesk has stated that EAGLE Premium is included with Fusion, and that after June 7, 2026, EAGLE will no longer be available or supported. (Autodesk) That one date forces many teams to revisit assumptions they made years ago.

Let's define what really matters when picking PCB software

If you’re evaluating a PCB software subscription in 2026, the best way to cut through the noise is to separate what you pay from what you actually get done.

First, the total cost of ownership (TCO) is rarely just a license line item. The real spend is typically dominated by engineering time: floorplanning, constraint setup, routing, iteration, DRC clean-up, design reviews, and the “surprise work” that appears when a layout doesn’t behave the way you expected. Per-seat pricing hides this because it’s easy to approve a tool renewal while quietly paying for weeks of schedule slip and late nights. If you’re an engineering manager, your TCO model should include at least: (1) time-to-first-layout, (2) the number of iterations you can afford to run, and (3) the cost of a respin when assumptions break at fabrication.
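A minimal sketch of such a TCO model, assuming a simple additive structure: license spend, plus engineering hours for the first layout and each iteration, plus the expected cost of a respin. The class, field names, and every number below are hypothetical placeholders for illustration, not vendor pricing or measured data.

```python
from dataclasses import dataclass


@dataclass
class TcoInputs:
    """Illustrative inputs only; replace every value with your own data."""
    license_cost: float           # annual subscription spend
    hourly_rate: float            # loaded engineering cost per hour
    hours_to_first_layout: float  # (1) time-to-first-layout
    iterations: int               # (2) iterations you plan to run
    hours_per_iteration: float    # average engineering hours per iteration
    respin_probability: float     # (3) chance a respin is needed, 0.0 to 1.0
    respin_cost: float            # cost of one respin, including delay


def total_cost_of_ownership(x: TcoInputs) -> dict:
    """Break TCO into license, engineering time, and expected respin cost."""
    engineering = x.hourly_rate * (
        x.hours_to_first_layout + x.iterations * x.hours_per_iteration
    )
    expected_respin = x.respin_probability * x.respin_cost
    total = x.license_cost + engineering + expected_respin
    return {
        "license": x.license_cost,
        "engineering": engineering,
        "expected_respin": expected_respin,
        "total": total,
        "license_share": x.license_cost / total if total else 0.0,
    }


# Hypothetical project: placeholder numbers, not a benchmark.
example = TcoInputs(
    license_cost=1000.0,
    hourly_rate=100.0,
    hours_to_first_layout=80.0,
    iterations=6,
    hours_per_iteration=20.0,
    respin_probability=0.25,
    respin_cost=20000.0,
)
breakdown = total_cost_of_ownership(example)
print(f"License share of TCO: {breakdown['license_share']:.1%}")
```

With inputs like these, the license line is a small fraction of the total, which is the point of the paragraph above: the model is only as good as the engineering-time and respin estimates you feed it.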

Second, feature access matters only when it removes risk or saves cycles. Advanced rules, high-speed constraints, differential pair routing, impedance control workflows, and MCAD collaboration can be table stakes depending on your product. But the question is: are these features locked behind tiers, add-ons, or extra platforms? For example, Altium’s platform pricing includes specific per-user capabilities like Advanced MCAD CoDesigner at USD 499 per user/year. (Altium) Those add-ons can be the difference between “we own the tool” and “we can actually collaborate the way our org works.”

Third, support and updates are part of what you are buying. Subscription models typically bundle updates, but the practical question is response time and escalation path when a tool blocks a release. Many teams underestimate the cost of slow support until they are midway through bring-up.

Fourth, commercial restrictions and IP posture are not fine print. Some plans limit commercial use, collaboration scope, or the durability of your workflow when products are deprecated. Autodesk’s EAGLE timeline is a clear example of why “what happens in 18 months” belongs in your evaluation rubric, not just “what works today.” (Autodesk)

If you want a simple scoring lens, use this: How much design capacity does this subscription unlock per dollar, and how predictable is that capacity under deadline pressure?

How do leading plans from Altium, Autodesk, and Quilter stack up?

Here’s the cleanest way to compare subscription plans for PCB software in 2026: look at the subscription unit each vendor sells you.

- Traditional EDA usually sells a seat (a named user or author) and optionally offers collaboration, integrations, or additional capabilities.

- AI-driven layout can sell an outcome (a completed, approved, downloadable layout) and let you iterate without punishing your org chart.

Side-by-side comparison table (2026 view)

| Category | Altium (Designer + platform services) | Autodesk Fusion 360 Electronics (EAGLE via Fusion) | Quilter (AI PCB layout) |
| --- | --- | --- | --- |
| Subscription unit | Typically per user/seat, often paired with platform services | Per user subscription to Fusion, with electronics workflow access via Fusion | Outcome-based: pay for approved designs, not seats |
| Typical license structure | Author seats and workspaces are priced per year in some offerings (example: Develop workspace and author pricing) (Altium) | Autodesk Fusion price is listed as $680 paid annually (and other terms) (Autodesk) | “Explore freely, pay only for approved designs”; pricing scales by pin count, not seats (quilter.ai) |
| Electronics roadmap risk | Generally stable, but packages and entitlements vary by plan | EAGLE included with Fusion; Autodesk says EAGLE will no longer be available nor supported after June 7, 2026 (Autodesk) | Model designed for iteration without per-seat friction; “unlimited iterations” messaging appears on Quilter free tier (quilter.ai) |
| Collaboration and add-ons | Platform pricing includes per-user add-ons like Advanced MCAD CoDesigner (USD 499 user/year) (Altium) | Strong ECAD-MCAD story inside Fusion ecosystem (CAD + electronics workflows) (Autodesk) | Browser-based review plus CAD handoff in native formats is core positioning (see workflow section below) |
| Value metrics | Cost per seat, regardless of how many layouts you finish | Cost per seat regardless of iteration count | Cost per successful layout (approved design download) (quilter.ai) |
| Best fit (common pattern) | Teams deeply invested in traditional EDA flows and tight control of authoring seats | Teams that want a unified mechanical + electronics environment, mindful of the EAGLE timeline (Autodesk) | Teams optimizing for iteration speed, design throughput, and “design capacity” without adding headcount (quilter.ai) |

Quick take: If your bottleneck is “we don’t have enough people licensed to do layout,” per-seat subscriptions feel natural. If your bottleneck is “layout takes too long, and we cannot afford the iteration,” outcome-based pricing starts to look like a different category of tool.

What’s meaningfully different about Quilter in this comparison

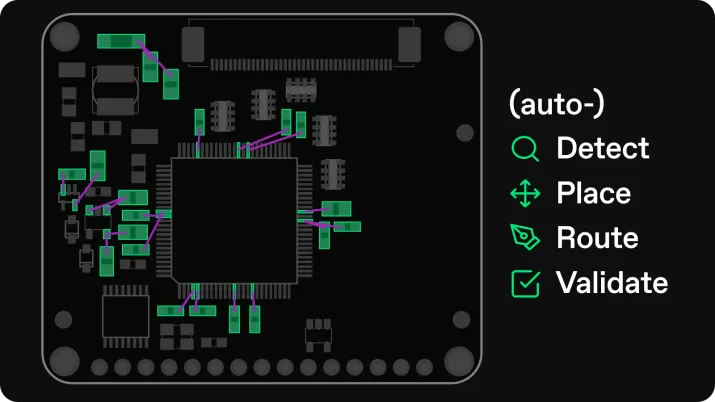

Quilter’s positioning is not “another CAD seat.” It’s closer to “design capacity on demand.” On the Quilter site, the framing is explicit: explore freely, pay only for approved designs, with pricing that scales by pin count, not by seats, so that organizations can iterate broadly. (quilter.ai) The workflow is positioned to slot into existing toolchains: it supports uploads from major EDA ecosystems and returns files in the same format as submitted, while emphasizing physics-aware review and transparent constraint evaluation.

That one shift changes how you plan staffing, how you budget iteration, and how you forecast schedule risk.

Here's why 'cost per successful layout' changes the value equation

Most engineering leaders already know that “license cost” is the least interesting number in the room. The problem is that most subscription models still force you to budget as if licenses are the bottleneck.

Traditional subscriptions optimize for occupancy, not throughput

With per-seat pricing, you pay a fixed annual rate per designer or author seat. That can work well when:

- work is steady and predictable, and

- layout demand matches headcount.

But it penalizes the exact behavior that creates better boards: trying more alternatives. If spinning an alternate placement strategy means waiting for the one person with a seat, you are not really measuring cost per seat. You are measuring cost per blocked decision.

Autodesk Fusion’s subscription is priced with a precise annual amount on its overview page (for example, $680 annually, with other terms listed as well). (Autodesk) That’s a perfectly reasonable way to price a broad platform. The key question is whether that subscription unit aligns with how your electronics team actually works, especially given that EAGLE will no longer be available or supported after June 7, 2026. (Autodesk)

Quilter’s model aligns spend to output

Quilter’s published pricing framing is the opposite: explore freely, pay only for approved designs. (quilter.ai) In practice, that means the economic unit becomes something closer to “a finished, downloadable layout you chose to ship,” rather than “who had a seat.”

This is where cost per successful layout becomes a valuable management metric:

- Cost per seat answers: “How many people can open the tool?”

- Cost per successful layout answers: “How many boards did we actually get to a manufacturable state, how fast, and with how many iteration cycles?”

When you reframe the metric, you start to see why outcome-based pricing can change behavior:

- Engineers can explore more layout options without requiring additional seats.

- Hardware teams can treat layout as an “abundant resource” rather than a scarce specialty.

- Budgeting becomes tied to program output, not organizational structure.
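To make the reframing concrete, here is a small sketch that computes both metrics from the same spend. The function names and the two quarters of data are hypothetical; the only point is that cost per seat can stay flat while cost per successful layout moves a lot.

```python
def cost_per_seat(total_spend: float, seats: int) -> float:
    """Spend divided by how many people can open the tool."""
    return total_spend / seats


def cost_per_successful_layout(total_spend: float, approved_layouts: int) -> float:
    """Spend divided by layouts that reached an approved, manufacturable state."""
    if approved_layouts == 0:
        return float("inf")  # money spent, nothing shipped
    return total_spend / approved_layouts


# Two hypothetical quarters with identical spend and seat count.
quarters = {
    "blocked": {"spend": 9000.0, "seats": 3, "approved_layouts": 2},
    "flowing": {"spend": 9000.0, "seats": 3, "approved_layouts": 6},
}

report = {
    name: {
        "per_seat": cost_per_seat(q["spend"], q["seats"]),
        "per_layout": cost_per_successful_layout(q["spend"], q["approved_layouts"]),
    }
    for name, q in quarters.items()
}

for name, metrics in report.items():
    print(name, metrics)
```

The per-seat figure is identical in both quarters, so it cannot tell a manager which quarter went well; the per-layout figure can.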

Mini-case study: one project, two budgeting styles

Let’s use a realistic scenario that shows why this matters.

Scenario: A small hardware team is building a compute-adjacent product with a medium-complexity PCB. They expect:

- 1 initial layout,

- 2 major revisions (placement changes, connector move, power integrity tweaks),

- 3 minor revisions (DFM polish, silkscreen, mechanical tweaks),

- and at least one parallel experiment (alternate form factor).

That is 7 meaningful layout cycles in a short window.

Per-seat world: The cost you see is the annual subscription fee(s). The cost you cannot see is the queue: who can do layout work this week, and which schedules slip as a result. If you purchase additional seats to reduce the queue, you are committing to recurring spend, even if the surge is temporary.

Cost-per-layout world: You can budget explicitly for the number of “approved, downloadable” outcomes you expect to produce, while allowing your org to generate and review candidates freely during exploration. Quilter’s pricing page messaging highlights this idea: capacity scales without limiting iteration based on seat count. (quilter.ai)
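The two budgeting styles can be sketched with a few lines of arithmetic. This is a simplified model under stated assumptions: every layout cycle takes the same number of working days, each seat handles one cycle at a time, and the seat fee, cycle duration, approved-layout count, and per-layout price are hypothetical placeholders rather than any vendor's actual pricing.

```python
import math

# Layout cycles from the scenario above.
cycles = {
    "initial_layout": 1,
    "major_revisions": 2,
    "minor_revisions": 3,
    "parallel_experiments": 1,
}
total_cycles = sum(cycles.values())


def per_seat_plan(seats: int, annual_fee_per_seat: float, days_per_cycle: int) -> dict:
    """Visible cost is the fee; hidden cost is the queue behind each seat."""
    waves = math.ceil(total_cycles / seats)  # cycles run `seats` at a time
    return {
        "annual_spend": seats * annual_fee_per_seat,
        "calendar_days": waves * days_per_cycle,
    }


def per_outcome_plan(approved_layouts: int, price_per_approved_layout: float) -> dict:
    """Budget only for the outcomes you expect to approve and download."""
    return {"spend": approved_layouts * price_per_approved_layout}


one_seat = per_seat_plan(seats=1, annual_fee_per_seat=1000.0, days_per_cycle=5)
two_seats = per_seat_plan(seats=2, annual_fee_per_seat=1000.0, days_per_cycle=5)
outcomes = per_outcome_plan(approved_layouts=3, price_per_approved_layout=1000.0)

print("cycles:", total_cycles)
print("one seat:", one_seat)
print("two seats:", two_seats)
print("per outcome:", outcomes)
```

In this toy model, adding a second seat shortens the queue but doubles a recurring commitment, while the outcome plan depends only on how many layouts you approve. Real comparisons need your own cycle times and quoted prices.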

What changes operationally: Engineering managers stop asking “Do we have enough licenses?” and start asking “How many iterations can we afford, and which iterations de-risk the program the most?” That’s the ROI conversation you want in 2026.

What results can you expect with AI-driven PCB layout?

If you are evaluating “AI PCB layout” seriously, you should demand clear answers in three categories: speed, quality, and team impact.

1) Speed: moving from serial layout to parallel candidates

Quilter’s positioning is that you can generate multiple candidates quickly and iterate fast, with an emphasis on accelerating layout cycles and enabling more design exploration. Combined with its pricing stance (explore freely, pay only for approved designs), the intended result is not merely “faster routing,” but a workflow where you can run more experiments without turning every experiment into a budget negotiation. (quilter.ai)

In short: AI-driven layout is most valuable when it turns layout iteration from a weeks-long bottleneck into a same-day feedback loop, letting teams test more floorplans and constraints before committing to fabrication. (quilter.ai)

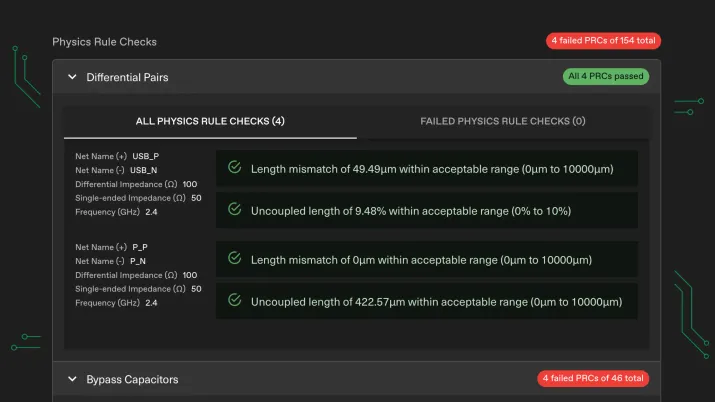

2) Quality: physics-aware review and fewer unpleasant surprises

Speed is meaningless if it produces boards that fail in bring-up. Quilter’s messaging emphasizes physics-aware design review and transparent evaluation against provided physical constraints, which is the right direction for teams that care about fewer respins. The practical expectation to set internally is not “AI means perfect boards,” but “AI can reduce common layout failure modes and compress iteration time so issues surface earlier.”

3) Team impact: increasing engineering bandwidth

This is usually the biggest win. When layout throughput increases, your senior engineers can spend more time on high-value work: architecture decisions, constraint definition, review, test planning, and the complex trade-offs that actually differentiate products.

Quilter explicitly frames this as increasing engineering bandwidth by freeing designers and engineers to focus on complex tasks, while generating candidates quickly. That matters because the best teams do not want to remove humans from the process. They want to remove waiting from the process.

Try an AI-driven workflow on a real board

Want to see what “cost per successful layout” looks like for your team?

Run a pilot on a real project, compare iteration speed, and request a value analysis aligned with your pin count and layout requirements. (quilter.ai)

If you want a copy-paste button/banner for your CMS, here’s a simple HTML snippet you can use (links included):

<div style="border:1px solid #ddd;padding:16px;border-radius:12px;">
  <strong>Ready to test AI PCB layout on a real project?</strong>
  <p style="margin:8px 0 12px 0;">
    Upload a design, explore candidates, and pay only when you approve a downloadable layout.
  </p>
  <a href="https://www.quilter.ai/free-ai-pcb-design" style="display:inline-block;padding:10px 14px;border-radius:10px;text-decoration:none;border:1px solid #111;">
    Try Quilter Free
  </a>
  <a href="https://www.quilter.ai/pricing" style="display:inline-block;margin-left:10px;padding:10px 14px;border-radius:10px;text-decoration:none;border:1px solid #111;">
    View Pricing
  </a>
</div>

Here's what to consider before switching your PCB design workflow

Switching workflows is not just about changing software. It’s changing how decisions are made, how reviews are conducted, and how iteration is funded. Here’s what to pressure-test before you commit.

1) Workflow compatibility and file handoff

Your team has existing investments: libraries, constraints, CAD conventions, and review rituals. A modern tool must respect that reality. Quilter’s workflow positioning is built around working with existing ecosystems and returning files in the same format you submitted, so teams can still run DRC, polish, and generate fabrication outputs inside their primary CAD environment.

If your process includes strict internal sign-off, that “return to native tool” step is crucial.

2) Learning curve: define who does what

AI-driven layout changes responsibilities. In many teams:

- Senior engineers own constraints, architecture, and review.

- Designers own implementation, cleanup, and manufacturing prep.

With AI in the loop, your best outcome often comes from re-centering humans on defining and reviewing constraints, then using AI to generate candidates. Your onboarding plan should explicitly define:

- who sets constraints,

- who validates candidates,

- and when you convert a candidate into an approved, downloadable design.

That clarity prevents confusion and makes pilot results comparable to your existing baseline.

3) Support, security, and organizational adoption

Traditional platforms typically have well-understood enterprise procurement patterns. With newer models, evaluate:

- response expectations when deadlines hit,

- how access is granted across teams,

- and how you keep IP handling aligned with policy.

Also consider roadmap stability. Autodesk’s plan to end EAGLE availability and support after June 7, 2026 is a reminder that platform direction can shift, and your workflow should include an exit plan, not just an onboarding plan. (Autodesk)

4) How to run a pilot that actually proves something

A good pilot is not a toy board. Pick a project that has:

- real constraints,

- at least one known pain point (dense connector region, sensitive power, mechanical constraints),

- and a deadline that matters.

Then measure outcomes with the same discipline you apply to firmware or test:

- time-to-first-candidate,

- number of iterations reviewed,

- issues caught before fabrication,

- and total engineering time spent to reach “ready for fab.”

If you want a single decision rule: run the pilot until you can estimate the cost per successful layout for your team and compare it to your current “seat cost plus schedule drag” reality.
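One way to apply that decision rule is to record the pilot measurements in a small structure and compare the result against your current baseline. The sketch below assumes a loaded hourly rate and a per-day cost of schedule delay that you supply yourself; the class, function names, and all figures are hypothetical placeholders, not results from any real pilot.

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    """The pilot measurements listed above, plus spend and approved output."""
    hours_to_first_candidate: float
    iterations_reviewed: int
    issues_caught_before_fab: int
    engineering_hours_to_fab_ready: float
    tool_spend: float
    approved_layouts: int


def pilot_cost_per_layout(r: PilotResult, hourly_rate: float) -> float:
    """Tool spend plus engineering time, per approved layout."""
    if r.approved_layouts == 0:
        raise ValueError("pilot produced no approved layouts yet")
    engineering = r.engineering_hours_to_fab_ready * hourly_rate
    return (r.tool_spend + engineering) / r.approved_layouts


def baseline_cost_per_layout(
    seat_cost: float,
    engineering_hours: float,
    hourly_rate: float,
    schedule_drag_days: float,
    cost_per_drag_day: float,
    layouts: int,
) -> float:
    """Current reality: seat cost plus engineering time plus schedule drag."""
    drag = schedule_drag_days * cost_per_drag_day
    return (seat_cost + engineering_hours * hourly_rate + drag) / layouts


# Placeholder numbers for illustration only.
pilot = PilotResult(
    hours_to_first_candidate=4.0,
    iterations_reviewed=12,
    issues_caught_before_fab=5,
    engineering_hours_to_fab_ready=40.0,
    tool_spend=2000.0,
    approved_layouts=2,
)
pilot_cost = pilot_cost_per_layout(pilot, hourly_rate=100.0)
baseline_cost = baseline_cost_per_layout(
    seat_cost=1000.0,
    engineering_hours=120.0,
    hourly_rate=100.0,
    schedule_drag_days=10.0,
    cost_per_drag_day=500.0,
    layouts=2,
)
print(f"pilot: {pilot_cost:.0f} per layout, baseline: {baseline_cost:.0f} per layout")
```

The comparison is only meaningful if both sides use the same hourly rate and the same definition of “ready for fab,” so it helps to fix those before the pilot starts.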